By Eric Ritter, CEO and President of Digital Neighbor

Browse the best articles, news and blogs on marketing engagement from the leading event provider in this space.

By Claudia Mastromauro, Vice President of Sales, Strategy & Operations in the UK for Aryel

An interview with Simon Daniels, Principal Analyst at Forrester

By Ian Gibbs, Director of Insight at the DMA

By Simon Daniels, Principal Analyst at Forrester Research

In recent years, customers have become increasingly difficult to attract and retain. With...

By Fiona Passantino, Founder of Executive Storylines. AI is one of the world’s fastest growing...

By Alexis Quintal, Owner & CEO of Rosarium PR & Marketing Collective

In 2024, it is no longer enough to just sell products or services; marketers must build genuine...

An interview with the Founder and Chief Strategist of Digital Delane

An interview with the winner of two categories in the 2023 Engage B2B Awards

An interview with the winners of the Best Multichannel Campaign

By Resa Gooding, Services Manager at HubSpot

An interview with the winner of two categories in the 2023 Engage B2B Awards

An interview with the winner of the Industry Trailblazers Under 30 award!

In December, people begin to reflect on the year that has passed and outline their goals for the...

Despite the cold weather and disruptive train strikes on the 6th of December, around 500 industry...

Find out how the 2023 winners impressed our judges this year.

A pre-event interview with the UK Marketing Director in Merchant Services at American Express

You can attend our final event of 2023 for free!

Almost one year on since ChatGPT took the world by storm, Nick Floyd, Head of Content at Catalyst...

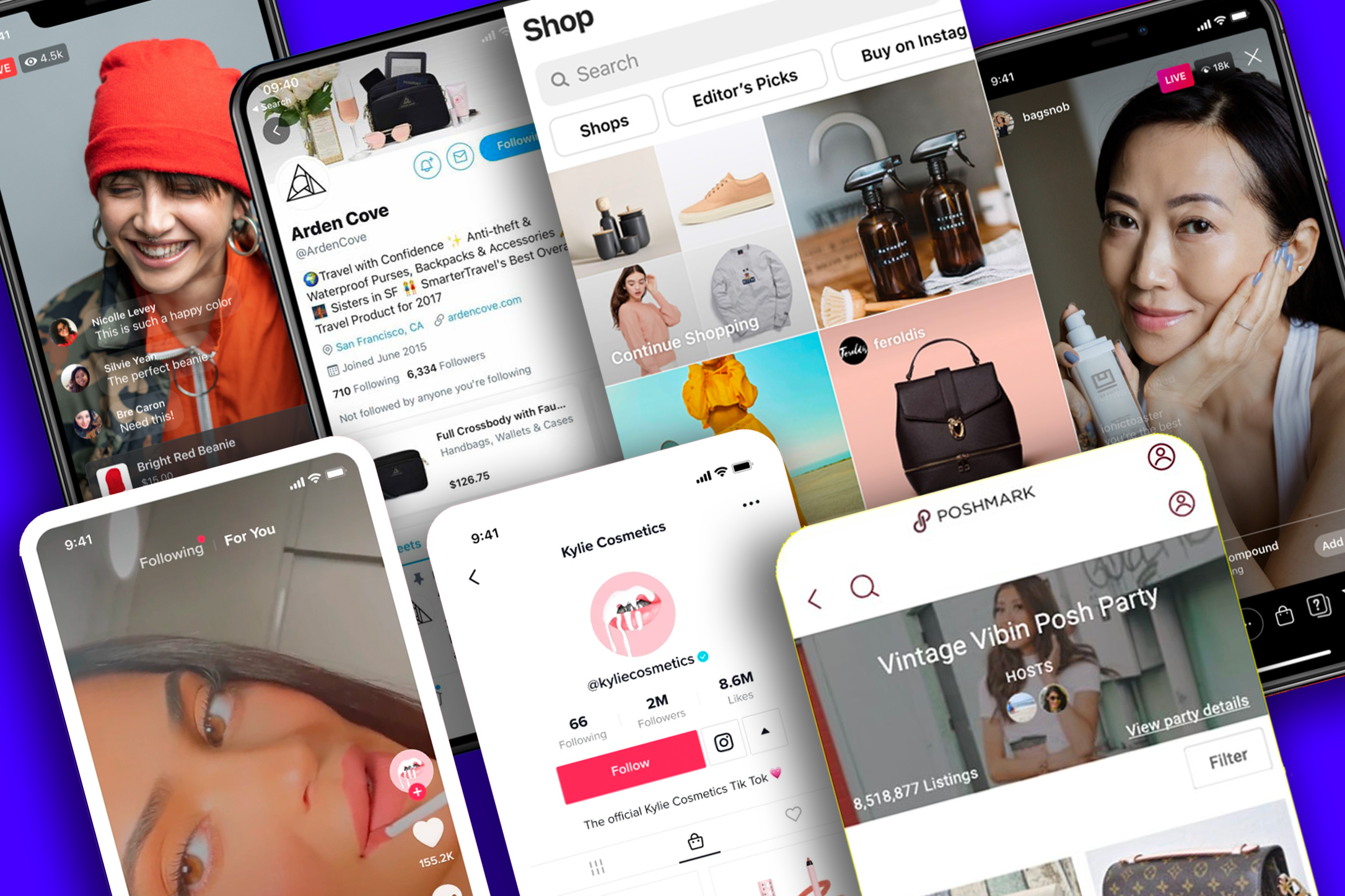

Claudia Mastromauro explores iCommerce, the fusion of influencer marketing and online shopping.

Kate Burnett, General Manager of the DMA's Talent division, discusses why a disproportionate number...

By Chantal Reed – Strategic Digital, Data Marketing Consultant and VP

An interview with the No. 1 Global Marketing Thought Leader

To mark National Customer Service Week this year (October 2-6), we are looking back at the work we...

Thomas Peham, VP of Marketing at enterprise CMS Storyblok, looks at the new skills marketers will...

While Christmas may still be months away, many people have already started thinking about their...

We are delighted to announce the finalists of our 2023 Engage B2B Awards – the industry's only...

By Ian Gibbs, Director of Insight at The Data & Marketing Association (DMA UK)

An interview with the co-founder and CEO of Digital Exhaust

An interview with the Co-Founder and CMO of Content Monsta

Judging will commence on August 28th

The Data & Marketing Association’s (DMA UK) Director of Insight, Ian Gibbs, discusses the key...

An interview with the Founder and Director of Electric Peach

In many companies, the marketing and sales departments are divided by an ocean of...

We are delighted to announce that the Engage B2B Awards entry deadline has been extended to August...

By Mimi Nicklin, Creative CEO at Freedm

An interview with Standard Chartered Bank’s Director of Social Media – Corporate Affairs, Brand and...

Since the birth of AI chatbots like ChatGPT and Bard, all anyone seems to talk about is how...

By Chantal Reed, Fractional CMO, Launch and Growth Marketing Advisor: Tech Innovation, SaaS and CX

Ian Gibbs, Director of Insight at the DMA, discusses why the Door Drop channel continues to perform...

Our customers and the buying process are constantly changing, becoming more and more complicated....

An interview with Imagen Insights’ Co-Founder

During March and April, Gartner surveyed 410 CMOs and marketing leaders from across different...

Neurodiversity Celebration Week (NCW) and the specialist psychological consultancy Lexxic Ltd...

By Chantal Reed, Fractional CMO, Launch and Growth Marketing Advisor: Tech Innovation, SaaS and CX

Have you submitted your entry for the 2023 Engage B2B Awards? If not, this is your sign to reflect...

An interview with the Founder of The Content Advisory (TCA) and the Chief Strategy Advisor of the...

Marketing industry trade body’s Director of Insight, Ian Gibbs, provides CMOs and marketers with...

In this interview, Louis Ross, UK Marketing Director – Merchant Services at American Express, talks...

As part of our Meet the Judges campaign, we are delighted to introduce the Executive Director of...

Today, as part of our Meet the Judges campaign, we are introducing the Founder of The B2B Marketing...

An interview with the Global Head of Social and Omnichannel at Standard Chartered Bank

What are the biggest challenges that marketers are facing today? How will the advancements in...

In December 2022, Caroline O’Keeffe and her team at The Happiness Index walked across the ballroom...

What skills do you need to be successful? How are the advancements in Artificial Intelligence...

By Chantal Reed, EMEA Growth Marketing Strategist

Last month, we launched the Meet the Judges campaign to present the experts who will be assessing...

Earlier this year, we held the 2023 Future of MarTech Conference, bringing together world-renowned...

Last month, we launched the Meet the Judges campaign to introduce the industry experts who will be...

Jennifer Shanks is a marketing and communications professional with over 14 years of experience in...

Ian Gibbs, Insight Director of the Data & Marketing Association (DMA UK), discusses why email...

We know that some companies may be hesitant to enter the 2023 Engage B2B Awards simply because they...

We’re excited to release our exclusive interview with B2B marketing expert Alan Davis, Head of...

Following a fantastic session at our Marketing Engagement Summit in December, it was great to sit...

In this interview, Bruno Singulani, Global Brand Identity & Design - Culinary Brands at Nestlé...

At the end of last week, I had the pleasure of sitting down with Jamie Mackenzie, Chief Marketing...

An interview with Malkit (Mal) Kaur, VP-Head of Demand Generation, Genesis Global

An interview with EMEA Growth Marketing Strategist, Chantal Reed

To say that the past few years have been difficult for both organisations and individuals would be...

An interview with LinkedIn’s Growth Marketing Lead.

An interview with Oasis Investment Company’s Head of Strategy, Marketing & Transformation.

David van Schaick, CMO at The Marketing Practice

Last week, we held the 2023 Future of MarTech and SalesTech Conference at The Brewery in London....

An interview with the Sales and Marketing Operations Manager at The Happiness Index.

An interview with the Managing Director of Sales Expand Ltd.

An interview with Plum Guide’s Chief Brand Officer

By Tasha Wait, Digital Health Marketing Specialist

Last week, we held the 2023 Future of MarTech Conference at The Brewery in London. Taking place at...

To mark International Women’s Day this year, I have spent hours researching and reading the stories...

An interview with Moneypenny’s Group CEO

An interview with JLL’s Executive Director of Global Marketing

Marketing leaders must consider this change management best practice advice when tackling change...

On the 9th of March, Engage Business Media will hold its 2023 Future of MarTech Conference. This...

On Thursday the 9th of March, Engage Business Media will hold its 2023 Future of MarTech...

We are excited to share that there are just two weeks to go until our 2023 Future of MarTech...

An interview with the CEO and Founder of BPerfect Cosmetics

What tools should marketers equip themselves with for long-term success?

Account-based marketing (ABM) is a B2B marketing strategy that allows marketers to build stronger...

At the end of last year, the integrated education and sales group LTE Group received the Best...

Transforming your business and company culture can be a strenuous but rewarding task. Ethos Energy...

We are delighted to reveal that Rachel Aldighieri, Managing Director of the Data & Marketing...

Gallup’s annual “State of The Global Workplace Report” covers 140 countries in an array of topics,...

We are thrilled to announce that entries for the 2023 Engage B2B Awards are now open!

In 2019, The Happiness Index had no marketing strategy. In December 2022, it became the winner of...

In December, Engage Business Media held the 2022 Sales and Marketing Engagement Summits at the...

Before the pandemic, most events took place in person and followed a clear structure. Then, the...

At the annual Marketing Engagement Summit, we had the privilege of kicking off the day hearing from...

How can sales and marketing teams work in unison?

We are proud to announce that Engage Business Media has raised £2,327.50 for Cancer Research. This...

The first edition of the Engage B2B Awards took place on December 8th at the Victoria Park Plaza in...

Despite the cold weather and train delays, over 300 people made their way to the Marketing...

On the 8th of December, more than 200 people attended the Engage B2B Awards at the Victoria Park...

So-sure explains how it has broken the mould in the realm of insurance and developed a brand based...

Searcys explains how, 172 years after the brand was founded, it continues to progress and develop...

Twitter explains how it has transformed the way in which brands and customers can communicate with...

This was a first: a marketing get-together, more about real-world use cases than agency...

Oxfam, a globally renowned aid and development agency founded in 1942, explains how it has recently...

Being confined to home during the coronavirus crisis resulted in many people turning to crafts and...

Costa Coffee has reported a fall in sales at its cafes as a result of fewer people visiting UK High...

Rolls-Royce Motor Cars has renewed its commitment to Britain after a double-digit jump in UK...
